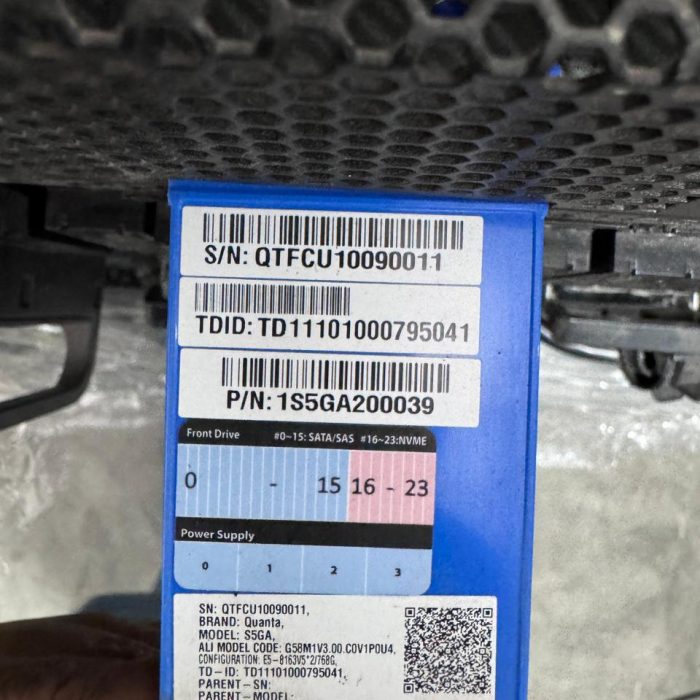

Quanta S5GA AI Server with 8x NVIDIA V100 GPUs, 128GB RAM, and 960GB NVMe SSD

The Quanta S5GA is an ultra-dense 5U rackmount server engineered as a high-performance GPU computing platform for AI, deep learning, and high-performance computing (HPC) workloads. It is designed to maximize computational throughput by supporting 8x NVIDIA Tesla V100 GPUs, interconnected via high-speed NVIDIA NVLink, within a single server chassis. Powered by dual Intel Xeon Scalable Processors and a large memory capacity, the S5GA offers extreme parallel processing capability and low-latency communication, making it well suited to training large-scale neural networks, complex simulations, and scientific research.

- Estimated Delivery: 4-5 business days

- Free Shipping

- Warranty as specified by seller

- 3-day returns. Buyer pays for return shipping.

The Quanta S5GA is a 5U, two-socket (2S) rackmount server purpose-built for the most demanding scale-up computational tasks. Its architecture is optimized to deliver peak performance and maximum acceleration, primarily featuring support for 8x NVIDIA Tesla V100 (Volta architecture) Tensor Core GPUs.

Core Architecture & Processing

The server is driven by dual Intel Xeon Scalable Processors (Skylake/Cascade Lake), offering up to 28 cores per CPU and maximizing PCIe lane count to feed the 8 accelerators. Memory is provided by 24 DDR4 DIMM slots, supporting up to 3 TB of ECC RDIMM/LRDIMM (using 128GB modules) running at speeds up to 2933 MHz (depending on the CPU generation). The system uses the Intel C621 chipset for enterprise-grade management and I/O control.
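The 3 TB maximum follows directly from the slot count and module size quoted above. A minimal sketch of that arithmetic, using only the figures from this listing (the helper name `max_memory_gb` is hypothetical):

```python
# Figures quoted in the listing; max_memory_gb is an illustrative helper.
DIMM_SLOTS = 24      # DDR4 DIMM slots
MAX_DIMM_GB = 128    # largest supported module, in GB


def max_memory_gb(slots: int, dimm_gb: int) -> int:
    """Total capacity when every slot holds a module of the same size."""
    return slots * dimm_gb


total_gb = max_memory_gb(DIMM_SLOTS, MAX_DIMM_GB)
total_tb = total_gb / 1024  # 1 TB = 1024 GB in memory-capacity terms

print(f"{DIMM_SLOTS} x {MAX_DIMM_GB} GB = {total_gb} GB ({total_tb:.0f} TB)")
```

The same helper can be used to check smaller configurations, such as the 128GB build in the listing title, against the number of populated slots.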

GPU & Interconnect Subsystem (The Engine)

The central feature is the robust GPU complex:

- GPU Support: 8x dual-width NVIDIA Tesla V100 GPUs (SXM2 or PCIe form factor, depending on the S5GA variant) with up to 32GB HBM2 memory per GPU.

- Interconnect: For the highest performance, the S5GA supports NVIDIA NVLink (via an NVLink Bridge or SXM2 board), providing up to 300 GB/s of peer-to-peer GPU communication bandwidth, up to 10x faster than standard PCIe Gen3.

- PCIe Topology: The system features a complex PCIe fabric, typically using internal PCIe switches (e.g., PLX) to ensure that all 8 GPUs are connected with dedicated PCIe Gen3 x16 lanes to minimize bottlenecks and ensure the host CPUs can efficiently manage I/O to all accelerators simultaneously.
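The "up to 10x" comparison between NVLink and PCIe Gen3 can be sanity-checked with a short calculation. The sketch below assumes the standard PCIe Gen3 parameters (8 GT/s per lane, 128b/130b encoding) and treats the 300 GB/s NVLink figure as an aggregate bidirectional number, so it is compared against bidirectional x16 bandwidth. It estimates theoretical peaks, not measured throughput.

```python
# Theoretical peak bandwidth comparison; real-world throughput will be lower.
PCIE_GEN3_GT_PER_S = 8.0       # giga-transfers per second, per lane
PCIE_GEN3_ENCODING = 128 / 130 # 128b/130b line-encoding efficiency
PCIE_LANES = 16                # x16 link per GPU
NVLINK_GB_PER_S = 300.0        # figure quoted in the listing (assumed bidirectional)

# One transfer carries one bit per lane, so divide by 8 to get bytes.
pcie_per_direction = PCIE_GEN3_GT_PER_S * PCIE_GEN3_ENCODING * PCIE_LANES / 8
pcie_bidirectional = 2 * pcie_per_direction
ratio = NVLINK_GB_PER_S / pcie_bidirectional

print(f"PCIe Gen3 x16: {pcie_per_direction:.2f} GB/s per direction, "
      f"{pcie_bidirectional:.2f} GB/s bidirectional")
print(f"NVLink / PCIe Gen3 x16: {ratio:.1f}x")
```

Under these assumptions the ratio comes out a little under 10, which is consistent with the "up to 10x" wording.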

Storage, Networking, and Power

- Storage: The S5GA provides flexible storage configurations, including 10-12 hot-swappable 2.5-inch drive bays (supporting SATA/SAS and often NVMe SSDs via U.2) to support high-speed data loading for training datasets.

- Networking: The system supports high-speed networking essential for clustering and large-scale data transfer, typically including 2x 10GbE or 25GbE ports integrated on the board, with multiple PCIe slots available for high-speed InfiniBand (100 Gb/s EDR or 200 Gb/s HDR) or Omni-Path host channel adapters (HCAs).

- Power & Cooling: Because the 8 V100 GPUs alone can draw up to about 2800 W (8 x 350 W), the server requires multiple high-efficiency, redundant power supplies (e.g., 4+2 or 3+3 redundancy) with a combined capacity of at least 3500 W to maintain stable operation under full load, coupled with an advanced fan-array cooling system to manage the high thermal design power (TDP).
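The power figures above can be turned into a rough sizing check. This is a sketch under one stated assumption: in an N+M redundant arrangement, the N active supplies alone must carry the full 3500 W, so the M spares add no usable capacity. The listing does not state how its "combined capacity" is defined, and the helper name `min_psu_rating_w` is hypothetical.

```python
import math

# Figures quoted in the listing.
GPU_COUNT = 8
GPU_WATTS = 350        # per-GPU draw used in the listing
REQUIRED_WATTS = 3500  # minimum combined PSU capacity from the listing


def min_psu_rating_w(required_w: int, active_psus: int) -> int:
    """Smallest per-PSU rating if only the active (non-spare) units share the load."""
    return math.ceil(required_w / active_psus)


gpu_load = GPU_COUNT * GPU_WATTS          # power budget for the GPUs alone
other_budget = REQUIRED_WATTS - gpu_load  # left for CPUs, memory, drives, fans

print(f"GPU load: {gpu_load} W, remaining budget: {other_budget} W")
for active, spare in [(4, 2), (3, 3)]:
    rating = min_psu_rating_w(REQUIRED_WATTS, active)
    print(f"{active}+{spare} redundancy: at least {rating} W per PSU")
```

With fewer active supplies, as in the 3+3 case, each unit needs a higher rating to cover the same load.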

| Weight | 50 kg |
|---|---|
